Archive for June, 2014

Big Science: Moonshots or Music?

The announcement in early June that the National Institutes of Health (NIH) was launching a 12-year, $4.7 billion initiative to study the brain caught my attention and spurred a bit of reflection about the NIH’s last big initiative, the Human Genome Project (HGP). We seem to be in an era of “Moonshot Science,” and maybe it is time to ask how the clinical reality of the HGP compares to the original moonshot vision promoted by its many advocates. Have we reached the clinical equivalent of the moon?

One of the main ideas behind the HGP was simple: if we know how the DNA-based genetic code varies between people with and without certain diseases, we will gain insight into the causes of disease. More importantly, we can then screen for the genetic variants associated with diseases and offer preemptive and preventive interventions to those at greater risk. In some cases we might also be able to tailor drug therapy to the individual’s genotype. For common conditions like hypertension, heart disease, diabetes, obesity, and some cancers, this led to what has been termed the common-disease common-variant hypothesis.

At one level the HGP has been a massive test of the common-disease common-variant hypothesis. What are the results so far?

First, for most of the big killers in the developed world mentioned above, clear-cut patterns of genetic risk have not emerged. Instead, hundreds of genetic risk variants with very small effect sizes have been discovered, and the distribution of these risky gene variants is the same in people with and without the disease of interest. Further, when information about these variants is plugged into the risk prediction scoring systems commonly used by doctors, the predictive ability of those systems does not improve. In fact, for heart disease, diabetes, and hypertension, simply knowing a patient’s height and waist size tells you far more about disease risk than genetics does.

Second, for common diseases the idea that we could get a gene test and then decide which drug is best for each patient is not working out as neatly as anticipated either. In fact, for conditions like high cholesterol and high blood pressure, the guidelines seem to be moving away from the idea that a specific drug might be picked for a specific patient based on genetics. For the commonly used blood thinner warfarin, which can be tricky to dose, there was hope that information about the genetics of its metabolism would lead to better dosing algorithms, but unfortunately the clinical trials testing this idea failed.

Third, there is increasing evidence that it is difficult for both patients and providers to put information about genetic risk in context. Tell people they are at lower risk and some pay less attention to behavioral factors like diet and exercise that might help them prevent a variety of conditions. Tell them they are at increased risk and at least some become fatalistic and may also neglect those same behaviors. There is also evidence that the perception of increased risk can lead to more tests, biopsies, and interventions, all of which cost money, and some of which, like preemptive surgery, carry their own risks.

Fourth, the HGP seems to have had the “side effect” of encouraging the development of animal models (mostly mice) in which an engineered genetic variant leads to a predictable pattern of disease. This can then lead to drugs that cure disease in animal models but fail in clinical trials. One example is Alzheimer’s disease and the idea that it is all about amyloid. A number of anti-amyloid drugs work in animal models designed to build up amyloid in the brain, but more than twenty have failed clinical trials in humans. Additionally, there seems to be a disconnect between the reductionist idea of amyloid as the cause of Alzheimer’s and the epidemiological data showing that the major risk factors for Alzheimer’s include diabetes, hypertension, and physical inactivity. In some animal models, the disease of interest does not even appear if the animals are given access to a minimal amount of exercise.

So, based on the issues outlined above, I would say some skepticism about moonshot biomedical science, including the brain initiative, is warranted. This skepticism also seems warranted because so many biomedical breakthroughs seem to be the reverse of Yogi Berra’s line about losing by making too many wrong mistakes. In medicine, sometimes we win by making the right mistakes. A good example is the class of drugs designed to block the growth of blood vessels in tumors and “cure” cancer. Their effects on cancer have been modest, but they have been vision-saving in macular degeneration. Likewise, drugs like Viagra started out as treatments for heart disease, and their effects on erectile dysfunction were an unexpected and profitable surprise for the drug companies. The backstory on Viagra is even more interesting, because the fundamental observations ultimately responsible for the drug (which led to a Nobel Prize) were the result of a “mistake” made by a lab technician.

In the early 1970s, the physiologist Julius Comroe and his anesthesiologist colleague Robert Dripps catalogued the 100 or so key discoveries needed to perform then-cutting-edge open-heart surgery and concluded that about half of them happened by serendipity. Comroe and Dripps questioned the wisdom of too much goal-directed big science just as the “war on cancer,” an even more dramatic metaphor than moonshot, was starting. As Gina Kolata reported in The New York Times in 2009, victory in that war is nowhere in sight.

However, there is hope. What the HGP has not revealed, together with new ideas from the field of evolutionary biology, is leading to much more nuanced views of the role DNA plays in shaping the fate of animals, including humans. These ideas show that DNA is not a simple read-only code or program but operates in a way that can actually adapt to the environment. My colleague Denis Noble from Oxford has argued that the genetic code is in fact not a code at all but more like a musical keyboard that can be played by other parts of the body and even by the environment, behavior, and culture. So perhaps we need to stop thinking about biomedical problems as moonshots or wars and start thinking about them more like music. With that mindset, maybe we can get to the “right mistakes” generated by all of this big science and find the clinical insights a little bit faster.

Dick Fosbury vs. Guidelines

Over the past couple of years I have argued that the current worldwide obsession with “big data” and metrics is going to lead all sorts of people astray in many fields. The related idea is that every human activity can be turned into a quality improvement project with guidelines and checkboxes that will reduce error and improve outcomes. Taken too far, these twin beliefs are going to limit the sort of individual mastery and innovation needed to find novel solutions to our problems.

A couple of months ago I gave a presentation at Mayo where I highlighted the problems of too much standardization in medicine and the risk it poses to better patient care and innovation. I drew parallels with the high jumpers Dick Fosbury and Debbie Brill, who as teenagers in the 1960s invented the “flop” and went over the bar backwards. In today’s world, would their efforts have been stifled by a compliance bureaucracy insisting that they face the bar as they went over? What would the “approval process” for this new technique look like in 2014? How many other barriers might be thrown up today to stop them from moving high jumping forward by going backwards?

I explore these and related issues in the talk below. It is a long presentation, about 40 minutes, but bear with me; I think you will enjoy the questions and observations it raises.

How the Heat Lost to the Heat

The big news in the first game of the NBA Finals was the air conditioning failure in San Antonio. This surely contributed to LeBron James cramping up and being unable to play the final four minutes of the game as the Spurs pulled away.

As the game wore on, ice packs and cold towels were used in an effort to keep the players cooler, and LeBron apparently changed his uniform at halftime to try to cool down. Might there have been a better strategy for dealing with a warm, humid environment where the temperature was apparently in the 90s Fahrenheit at game time? The simple answer is yes.

When our core temperature rises a degree or two, the internal thermostat in our brains activates nerves to the sweat glands and we start sweating. The internal thermostat also activates nerves that dilate the blood vessels in our skin, and blood flow to the skin increases. If the sweat evaporates, the skin stays cool and the blood flowing through it cools off. This evaporative cooling system lets the heat generated inside the body get out. When we exercise we produce more heat, and this heat transfer system becomes even more important.

So, the problems for LeBron and his colleagues included the heat they were producing while playing, the temperature in the building, and the reduced ability of their sweat to evaporate due to the humidity. All of this probably led to a vicious cycle of higher body temperature, more sweating, and more skin blood flow, with the extra sweating not cooling the body but instead causing more fluid loss. Any extra skin blood flow might also have left less blood flow for the players’ muscles.

So, what might have been done differently? First, wear less clothing. Any observer of the modern NBA can’t fail to notice the extra clothing and gear many of the players wear. All of this extra swag creates a microenvironment that makes it harder for sweat to evaporate and cool the skin. So, if it happens again, LeBron, ditch the tights. Second, forget the ice and cold towels; they may feel good, but if they are too cold they might actually reduce skin blood flow and raise core temperature. Third, get some fans. The key to the evaporative cooling system I described above is evaporating the sweat. My bet is that there were high-capacity fans somewhere in the arena, and the best strategy would have been to have players take their shirts off on the bench while the fans created the airflow needed to evaporate their sweat and keep them cool and, ultimately, in the game.

California Chrome Goes For It

Last year, before the Belmont Stakes, I wrote a post on the great racehorse Secretariat, who won the Triple Crown in 1973 with the most impressive athletic performance I have ever seen or heard of. This weekend, if California Chrome can win the Belmont Stakes, he will be the first horse since 1978 to win the Triple Crown.

Humans vs. Horses

Horses in general, and Thoroughbreds in particular, are remarkable running machines. Secretariat covered 1.5 miles (about 2414 meters) in the Belmont in 2 minutes and 24 seconds and won by about 30 lengths. When I use a race conversion calculator to estimate how fast a human could run 1.5 miles from the current men’s world records at distances from 800 to 5000 meters, rounded to the nearest second, here is what I come up with:

| Distance | Men’s world record | Estimate for 1.5 miles |
| --- | --- | --- |
| 800 m | 1:40.91 | 5:25 |
| 1000 m | 2:11.96 | 5:35 |
| 1500 m | 3:26.00 | 5:41 |
| Mile | 3:43.13 | 5:42 |
| 2000 m | 4:44.79 | 5:47 |
| 3000 m | 7:20.67 | 5:50 |
| 5000 m | 12:37.35 | 5:49 |

The average of these estimates is 5:41, meaning that Secretariat covered the distance about 2.4 times as fast as a world-class human middle-distance runner might.
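The post does not name the race conversion calculator behind the table, so this is only a hedged sketch: it assumes Riegel’s formula, T2 = T1 × (D2/D1)^1.06, as a stand-in. With the world records listed above, it lands within about a second of each table entry, and its average lands within a second of 5:41, giving roughly the same 2.4-fold ratio to Secretariat’s 2:24. The helper names `riegel` and `mmss` are illustrative.

```python
# Sketch: project men's world records to 1.5 miles.
# ASSUMPTION: Riegel's formula stands in for the unnamed calculator.

METERS_PER_MILE = 1609.344
TARGET_M = 1.5 * METERS_PER_MILE  # 1.5 miles, about 2414 m

# (distance in meters, men's world record in seconds), as listed in the post
RECORDS = [
    (800, 100.91),
    (1000, 131.96),
    (1500, 206.00),
    (METERS_PER_MILE, 223.13),
    (2000, 284.79),
    (3000, 440.67),
    (5000, 757.35),
]


def riegel(time_s: float, dist_m: float, target_m: float, k: float = 1.06) -> float:
    """Predict the time for target_m from a performance of time_s over dist_m."""
    return time_s * (target_m / dist_m) ** k


def mmss(seconds: float) -> str:
    """Format seconds as m:ss, rounded to the nearest second."""
    total = round(seconds)
    return f"{total // 60}:{total % 60:02d}"


estimates = [riegel(t, d, TARGET_M) for d, t in RECORDS]
average = sum(estimates) / len(estimates)
SECRETARIAT_S = 2 * 60 + 24  # 2:24 in the 1973 Belmont

for (d, _), est in zip(RECORDS, estimates):
    print(f"{d:7.0f} m -> {mmss(est)}")
print(f"average {mmss(average)}, ratio to Secretariat {average / SECRETARIAT_S:.2f}")
```

A fixed exponent slightly above 1 captures the idea that pace slows as distance grows; a different calculator would shift these numbers by a second or two.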

Maximal Oxygen Uptake

For both humans and horses, running really fast for a few minutes requires a high maximal oxygen uptake. This means that the heart has to be able to pump a large amount of blood to the muscles, where the oxygen is used to help power the running, and the lungs have to be able to transfer oxygen to the blood as it returns from the muscles. In elite human middle-distance runners weighing 60-70 kg, the heart can pump somewhere between 30 and 40 liters every minute, and it is not unusual to see such athletes breathing 150 to 200 liters per minute. In these athletes, maximal oxygen uptake can be about 80 ml/kg/minute, or roughly 4.5 to 6 liters per minute in absolute terms (that is, not divided by body weight).
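Converting between the relative (ml/kg/minute) and absolute (liters per minute) forms of maximal oxygen uptake is just a multiplication by body mass. A minimal sketch, using the 80 ml/kg/minute and 60-70 kg figures from the paragraph above; the function names are illustrative, not from any library:

```python
# Convert VO2max between relative (ml/kg/min) and absolute (L/min) units.


def absolute_vo2_l_per_min(relative_ml_kg_min: float, mass_kg: float) -> float:
    """ml/kg/min times kg gives ml/min; divide by 1000 for L/min."""
    return relative_ml_kg_min * mass_kg / 1000.0


def relative_vo2_ml_kg_min(absolute_l_per_min: float, mass_kg: float) -> float:
    """L/min times 1000 gives ml/min; divide by body mass for ml/kg/min."""
    return absolute_l_per_min * 1000.0 / mass_kg


for mass in (60, 70):
    vo2 = absolute_vo2_l_per_min(80, mass)
    print(f"{mass} kg at 80 ml/kg/min -> {vo2:.1f} L/min")
```

For a 60 kg runner this gives 4.8 L/min and for a 70 kg runner 5.6 L/min, both inside the 4.5 to 6 L/min range quoted above.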

What About Horses?

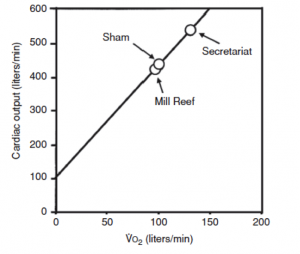

My colleague David Poole at Kansas State University has done some incredible studies of exercise capacity in racehorses. The graph above is from a terrific scientific review article he wrote with his colleague Howard Erickson on oxygen transport in horses and dogs. It shows the estimated cardiac output and oxygen uptake (VO2) of some notable horses. These horses weigh about 500 kg, so they are about seven or eight times heavier than elite human runners. Reading the numbers off the graph, Secretariat had an estimated oxygen uptake of about 130 liters per minute! That is more than 20 times the value seen in an elite human runner and, on a body weight basis, works out to something like 260 ml/kg/minute, more than three times the values seen in elite humans. It is also one of the major reasons he could run 2.4 times faster than a human. His estimated cardiac output was about 550 liters per minute; for those of you who think in terms of gallons, that is almost 150 gallons per minute! Breathing volumes in the range of 2,000 to 3,000 liters per minute are also possible in these great animals.
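The same arithmetic scales up to Secretariat. This back-of-the-envelope sketch uses only the approximate values quoted above (themselves read off a graph); the 65 kg human body mass is an assumed midpoint of the 60-70 kg range. With those inputs, the absolute VO2 ratio comes out at about 25, the per-kilogram ratio at 3.25, and 550 liters per minute converts to about 145 US gallons per minute.

```python
# Rough comparison of Secretariat with an elite human runner.
# Inputs are approximate values from the post, not measurements.

LITERS_PER_US_GALLON = 3.785411784

horse_mass_kg = 500
horse_vo2_l_min = 130             # estimated peak VO2, read off a graph
horse_cardiac_output_l_min = 550  # estimated peak cardiac output

human_mass_kg = 65                # ASSUMPTION: midpoint of 60-70 kg
human_vo2_rel = 80                # ml/kg/min

horse_vo2_rel = horse_vo2_l_min * 1000 / horse_mass_kg  # ml/kg/min
human_vo2_abs = human_vo2_rel * human_mass_kg / 1000    # L/min
gallons_per_min = horse_cardiac_output_l_min / LITERS_PER_US_GALLON

print(f"body-mass ratio:      {horse_mass_kg / human_mass_kg:.1f}x")
print(f"absolute VO2 ratio:   {horse_vo2_l_min / human_vo2_abs:.0f}x")
print(f"horse relative VO2:   {horse_vo2_rel:.0f} ml/kg/min")
print(f"relative VO2 ratio:   {horse_vo2_rel / human_vo2_rel:.2f}x")
print(f"cardiac output:       {gallons_per_min:.0f} US gal/min")
```

Changing the assumed human mass to 60 or 70 kg moves the absolute ratio between roughly 23 and 27; the per-kilogram comparison does not depend on it.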

When You Watch

When you watch the race this Saturday, the horses will be running about 50% faster than Usain Bolt and 24 times as far. Corporate sponsors aside, the race is really being brought to you by Mother Nature, selective breeding, and the physiology of oxygen consumption, all working together at the outer limits of biology.
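The speed and distance comparisons can be checked the same way. This sketch assumes Bolt’s 9.58 s 100 m world record as his average speed and uses Secretariat’s 2:24 for the horse; the 2:30 time is a hypothetical slower winner included only for comparison. Secretariat’s pace works out to roughly 60% faster than Bolt’s average, the slower time to a bit over 50%, and 1.5 miles is about 24 times 100 m.

```python
# Compare average speeds: a 1.5-mile Belmont run vs. Bolt's 100 m record.
# ASSUMPTIONS: Bolt's 9.58 s record as his average speed; 2:30 is hypothetical.

BELMONT_M = 1.5 * 1609.344  # 1.5 miles in meters
BOLT_100M_S = 9.58

bolt_speed = 100 / BOLT_100M_S  # m/s averaged over the whole 100 m

for label, horse_time_s in (("Secretariat 2:24", 144), ("hypothetical 2:30", 150)):
    horse_speed = BELMONT_M / horse_time_s
    print(f"{label}: {horse_speed:.1f} m/s, "
          f"{horse_speed / bolt_speed - 1:.0%} faster than Bolt")

print(f"distance ratio: {BELMONT_M / 100:.1f}x")
```

Using Bolt’s average over the full 100 m, rather than his peak speed, keeps the comparison like-for-like with the horse’s average over the whole race.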

You are currently browsing the Human Limits blog archives for June, 2014.